Deconstructing Google’s Helpful Content Update

Over the past few weeks, MarketMuse co-founder Jeff Coyle and I have been discussing Google’s Helpful Content Update. More precisely, we’ve been deconstructing it to better understand what might be happening behind the scenes and its potential impact in both the short and long term. What follows is a summary of those conversations.

What is Google’s Helpful Content Update?

As Google itself describes it, the Helpful Content Update introduces a site-wide signal powered by an automated, continuously running machine learning classifier. It aims to detect individual pieces of unhelpful content that can then accumulate and weigh down a site. Although operating at the page level, the classifier contributes to creating a temporal, weighted state for a site that can glide higher and lower.

There’s a great deal of information contained in that one paragraph, so let’s unpack that first.

Notice the name that Google gave this update. It’s specifically labeled as the “Helpful Content Update”, not the “AI-generated Content Update.” It’s a subtle yet critical distinction because what’s important is not how the content was created, but whether or not it’s useful. Ultimately, the search engine wants to surface quality content and this approach adds another layer of analysis – far better than trying to determine whether humans or machines generate the content.

“I think of the HCU as a living breathing organism. It’s a muscle that is going to keep growing and I think have more of an impact as time goes on as compared to what we’ve seen until now. To me, Google has to develop something like this not just to combat “SEO content” of the past but to deal with many marketers thinking that AI writers can spin up content across the entire site. So while I don’t think it’s specifically about AI content, like most machine learning properties it’s about profiling content, and I would have to believe that the intent is to include at least long-form AI written content into that profile.”

It’s a site-wide signal, which means that good content can be hurt by bad content. Think of this as having a governor (speed limiter) applied to all your content. In effect, it’s a weight holding your content down, preventing good pages from achieving their true potential. It’s one score in an ensemble of scores, but if you’re labeled a bad site due to the amount of unhelpful content, all your pages wear that scarlet letter.

The signal is generated automatically, meaning that there is no manual action taken. Once the issue has been identified, it will take time to experience any change. There’s no one to whom you can reach out to have this lifted. According to Google, the signal could apply for a period of months.

Most likely it’s a page-level (not topic-level) binary classifier; however, Jeff thinks there’s a distinct possibility that it’s a composite classifier, so let’s keep that in mind. In any case, that gets rolled up to the site level, creating a weighted grid of helpful/not helpful pages. At the site level, this is most likely a composite along with a collection of grades per topic/keyword.

They’ve said that “the signal is also weighted; sites with lots of unhelpful content may notice a stronger effect.” But we don’t know what ‘lots’ means in this context. Is the threshold an absolute number or a relative percentage?
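To make that open question concrete, here is a minimal sketch of the two possibilities. Everything in it is an assumption for illustration: the function names, the sample sites, and the threshold values (20% and 25 pages) are hypothetical, and Google has not disclosed how it actually measures “lots.”

```python
def unhelpful_share(labels):
    """Fraction of pages flagged unhelpful (labels are booleans, True = unhelpful)."""
    return sum(labels) / len(labels) if labels else 0.0


def crosses_threshold(labels, threshold, relative):
    """Check a site against a hypothetical threshold.

    relative=True  -> threshold is a share of all pages (e.g. 0.2 = 20%).
    relative=False -> threshold is an absolute number of unhelpful pages.
    """
    measure = unhelpful_share(labels) if relative else sum(labels)
    return measure >= threshold


# Two made-up sites with the same number of unhelpful pages.
small_site = [True] * 30 + [False] * 70      # 30 of 100 pages
large_site = [True] * 30 + [False] * 9970    # 30 of 10,000 pages

for name, site in [("small", small_site), ("large", large_site)]:
    print(name,
          "relative:", crosses_threshold(site, 0.2, relative=True),
          "absolute:", crosses_threshold(site, 25, relative=False))
```

Under the relative threshold only the small site is flagged, while the absolute threshold flags both. That difference is why the answer matters so much for large publishers.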

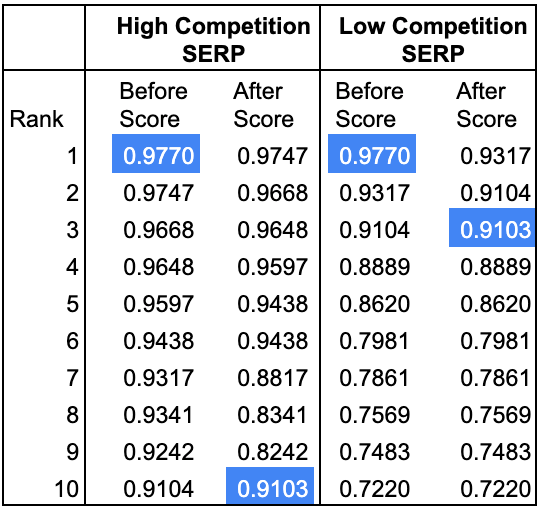

Google has also said that “some people-first content on sites classified as having unhelpful content could still rank well, if there are other signals identifying that people-first content as helpful and relevant to a query.” So we can surmise that they’re reducing the ranking score of an individual page based on that site-wide signal. For highly competitive search terms (with little variation in the ranking score of the top results) this could have a significant impact. For non-competitive terms, the change in rank may be minor if the individual ranking scores vary widely – the change in score will have less effect on a page’s position in the SERP.

In the example above (created for illustrative purposes) you can see how significant the change can be, depending on whether a page is competing for a topic ranking in a highly competitive SERP environment or not.
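The same reasoning can be sketched in a few lines of code. This is only an illustration under stated assumptions: the scores, the size of the penalty, and the `rank_after_penalty` helper are all invented, and real ranking scores are not public.

```python
def rank_after_penalty(page_score, competitor_scores, penalty):
    """Return (rank_before, rank_after), both 1-based.

    penalty is a hypothetical amount subtracted from the page's ranking
    score to stand in for the site-wide signal.
    """
    def rank(score):
        # A page ranks below every competitor with a strictly higher score.
        return 1 + sum(1 for s in competitor_scores if s > score)

    return rank(page_score), rank(page_score - penalty)


# Competitive SERP: competitor scores are tightly packed.
competitive = [0.90, 0.89, 0.88, 0.87, 0.86, 0.85, 0.84, 0.83, 0.82]
# Non-competitive SERP: competitor scores vary widely.
non_competitive = [0.90, 0.70, 0.55, 0.40, 0.30, 0.20, 0.15, 0.10, 0.05]

print(rank_after_penalty(0.895, competitive, penalty=0.05))     # (2, 7)
print(rank_after_penalty(0.80, non_competitive, penalty=0.05))  # (2, 2)
```

With the same made-up penalty, the page in the tightly packed SERP drops five positions, while the page in the widely spread SERP does not move at all.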

Is Google’s Helpful Content Update Significant?

A lot of SEO pundits are of the opinion, based on empirical evidence, that this update is having a minor impact. But I don’t think you can make that assertion for a very simple reason. Very few people will ever admit that their site has been affected by this update, because that’s like saying your baby is ugly. All parents have beautiful babies and everyone’s content is helpful. As Jeff points out, “multiple polls on prominent SEO Facebook groups would support this ‘reporting bias’ where 80% of respondents said this is flat or they went up... very few will report their failure.”

More importantly, this update is not a boost; it’s a weight. As Jeff explains, “the most familiar major quality-related update [Panda] occurred between 2011 and 2015, before being incorporated into the core algorithm in early 2016.” Panda used machine learning to pass in hypotheses, test them using various metrics, and generate conclusions, which were verified by humans. Let’s also be mindful that the Helpful Content Update is built on a machine-learning classifier, so it will continue to expand and improve on its own.

“I believe Google started small with the new Helpful Content Update classifier. There is a fine line between unhelpful content that was created specifically for SEO benefit and content that may actually be perceived as helpful to humans. I imagine Google didn’t want to go overboard by penalizing sites whose content could be seen as helpful (even if not by everyone, by some people). Because Google is using machine learning to further improve upon this classifier, it’s possible we will continue to see the impacts of this update expand and affect more types of content over time as Google gets smarter. The only problem is, we won’t necessarily know when Google is using it and to what extent, which will make it impossible to analyze without further confirmations from Google.”

Keep in mind that an unhelpful page is going to hurt itself through other mechanisms for ranking. It’s not going to rank well for all the other reasons why pages do well. Plus, it’s not going to do well as a result of this update. And it’s going to affect all the other pages on the site – so it’s like a double whammy.

The general consensus (lots of research out there confirms this) is that, for this initial roll-out, Google has set the bar for helpful content low to catch the worst of the garbage first. Expect that to change. Right now, they’re catching the most obvious signals, but over time they’ll feed in additional hypotheses and begin ratcheting it up.

Still, the key is that this move by Google is unprecedented. They’re using page-level analysis, rolling that up and applying it to the entire site. Now a couple of bad apples can spoil the whole bunch, so to speak.

This means you’ll need to re-evaluate using AI to scale low business-value content while leaving the high-value stuff to humans. There’s too much risk in using AI generated content “as is”, but let’s leave that for another discussion.

Diagnosing and Fixing Unhelpful Pages

No sooner did Google announce this update than SEOs of all stripes began offering their opinions on what action to take. While their one-dimensional sound bites may make it seem simple, I think reality will prove otherwise.

Here are a few ideas already making the rounds that you need to be wary of.

- There’s a magical number for the percentages of intent (top, middle, bottom of funnel) that your content should address. There probably are percentages, but it’s different for every concept. If you have 100% content focused on one stage of the funnel, that’s probably bad. Although you’ll want to create content to fit the other stages, there is no magic percentage. Jeff cautions that “Any SEO firm or practitioner claiming that there is a percentage overall and not at the topic level is giving you bad advice.”

- Look for pages with low word count, traffic, and link metrics. Here’s the problem with this approach. Google’s advice about avoiding creating content for search engines first does not mention link metrics. Their discussion of traffic only revolves around solely targeting high-traffic terms. And in talking about word count, there’s no mention of low word count being an issue.

- Remove your AI-generated content or obfuscate it because Google can detect this type of content. Keep in mind they didn’t call their update the “AI-generated content update.” Content can be unhelpful, even if it’s written entirely without automation. In other words, says Jeff, “Unmanaged, spun content is as much of a ticking time bomb as unmanaged [AI-]generated content, or $0.01 per word content purchased from a farm.”

If you are using MarketMuse, look at your Topic Authority, Page Authority, and Content Score. Currently, this offers the best possibility to assess your content and know where you may need to dig in and take action.

Keep in mind that it’s possible that what appears to be an unhelpful content issue could be a flux in topic authority or link authority. If you suspect that it’s a case of topic/site section authority score degradation, there is a way to find out. But whatever you do, don’t make a page that was about one thing into a page about many things with different intents that are illogically combined.

That won’t work. Instead, do this. Make sure the affected page is bulletproof for those terms driving the most traffic. Look at the list of terms that it ranked for historically to identify those that have a slight intent mismatch. If you have a page targeting that, link to it and improve it. Otherwise, create a supporting page.

Research variants you haven’t covered and use MarketMuse Compete to find other related topics not covered. Build out a supporting cluster of content, making sure to link from the main page to the others in a parent/child relationship. Publish the entire cluster simultaneously (usually about 5 to 10 pages) and wait two to three weeks. If it is a case of topic/site section authority score degradation, then you’ll reclaim your rankings and see the parent page creep upward.

Machine Learning and Its Impact on Google’s Helpful Content Update

If you have a good grasp of machine learning, you’ll immediately understand the multidimensional approach Google has taken to the Helpful Content Update. Otherwise, you’re probably wondering what a machine-learning model is and what its impact might be.

Machine learning analyzes data using specialized algorithms and statistical models to draw inferences from the patterns it finds. It’s able to learn and adapt without following explicit instructions, as required through traditional programming.

Neural networks are a type of machine learning algorithm commonly used in these applications. A network such as this can consist of thousands of cells or nodes that are interconnected and organized into layers. Each cell processes inputs and produces an output that is sent to the other nodes to which it is connected. Inputs can be weighted, which may affect a node’s output and, in turn, the final result.
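For readers who find code easier to follow than prose, here is a minimal sketch of a single node and a tiny two-layer network. It is a generic textbook illustration, not a description of Google’s model: the sigmoid activation, the function names, and every weight and input value are assumptions chosen for the example.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))


def node_output(inputs, weights, bias):
    """One node: a weighted sum of its inputs passed through an activation."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(total)


def forward(inputs, hidden_layer, output_node):
    """Run inputs through one hidden layer and a single output node.

    hidden_layer is a list of (weights, bias) pairs, one per hidden node;
    output_node is a single (weights, bias) pair.
    """
    hidden = [node_output(inputs, w, b) for w, b in hidden_layer]
    weights, bias = output_node
    return node_output(hidden, weights, bias)


# Made-up page signals and weights, purely for illustration.
signals = [0.8, 0.1, 0.5]
hidden_layer = [([1.5, -2.0, 0.3], 0.0), ([-0.7, 0.9, 1.2], 0.1)]
output_node = ([2.0, -1.0], -0.5)

print(forward(signals, hidden_layer, output_node))
```

Changing any single weight changes how much the corresponding input counts toward the final number, which is the sense in which some inputs can matter more than others.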

Google already uses neural nets to understand subtopics of a subject. With the Helpful Content Update, multiple inputs within the net are used to analyze and determine whether a page is helpful or unhelpful. Some inputs may be more important than others, depending on the context. Keep these points in mind for the next section where we look at what process Google may use.

Here’s What It Looks Like to Google When You Aren’t an Expert and You’re Not Helpful

We know that Google has created a neural network that can take multiple inputs and weight those signals to determine whether a page is helpful or not. Then it rolls that up to the site level and applies a weight if the amount of unhelpful content crosses an undisclosed threshold.

Now the question is, what sort of signals are these?

“Google has placed an emphasis on putting out useful content for years, and they continue to work towards prioritizing this in search engine results. I believe they are going to place much higher importance on the credibility behind an author or company. This topical authority will have a great deal of influence, so stronger bios or companies with high authority will be able to have more impact on search rankings. So for best results, it’s important to consider the relevancy of who is writing the content, if it is answering the question the user needs, and if the content is optimized with best practices.”

Before we look at that, we need to take a slight detour to discuss topic/site section and topic/site level authoritativeness scores. Although it’s computationally intensive, this is well within the realm of Google’s present-day capabilities. Authority is different from PageRank, removing the potential popularity bias (e.g. an entertainment site may get more links on a medical article even though it’s not the most authoritative).

Let’s assume there’s a site and site-section authority score for every topic covered by a page. Imagine pools of topics semantically related, forming a blob, each having an authoritativeness score.

Google figures out the structure of your site through internal links. If you’re powerful on a blob of words, linking to something else gives hints that this is related – all of that is intertwined. Now you’ve got your page-level quality scoring where you’re parsing the page into chunks and figuring out the meat of the content, the links, etc.

Let’s say you’re an entertainment site (think gossip columnist) and suddenly you’ve got a page covering a medical topic. Your page doesn’t exhibit expertise. Your site is about a bunch of different and very semantically irregular things. People visit your site and then quickly bounce back to Google and click on similar intent pages or continue their research on something they thought was going to be answered.

They’ve either said they’ve had a bad experience or shown this through their actions. Maybe:

- You don’t have images or use stock imagery.

- You have lots of content that’s low quality.

- Your collection of content closely tracks Google search volume or trending topics.

- You’re potentially detected as plagiarizing, spinning or some form of automated content creation.

- You routinely use a lot of generic statements or odd phrases indicating you don’t have experience.

- You have a similar word count across many pages.

- Your depth of content is very low (think MarketMuse Content Score).

- You have content that doesn’t answer the question promised.

So all of these things that get triggered contribute to a composite score at the page-level and are referenced against the total number of unhelpful pages. Now there has to be a word pool for each page analysis to be able to classify it as unhelpful. Because what if Google isn’t giving you a result that is good in the first place?

They probably look at the words that the page has some level of authority on. Jeff is quick to point out how important this is, “One has to think of every page as the sum of the authority it has on every topic-page combination.” And then they judge the actions. Because you can’t just take a page randomly and look at every way people would access it. What if Google is ranking it highly for something it’s not about?

Now let’s tie this all back into the “warning signs” that Google talks about in “What creators should know about Google’s helpful content update” and see how this could map out.

Do you have an existing or intended audience for your business or site that would find the content useful if they came directly to you?

This relates closely to the concept of authority as discussed earlier. Remember the idea of a popular entertainment site not necessarily being the most authoritative source.

Does your content clearly demonstrate first-hand expertise and a depth of knowledge (for example, expertise that comes from having actually used a product or service, or visiting a place)?

Click models could help in discerning this. Google’s patent for information gain could also be helpful in this regard as someone lacking depth of knowledge would not add anything to the conversation.

Does your site have a primary purpose or focus?

This is similar to the authority classifier described earlier and associated with site type understanding and site section awareness.

After reading your content, will someone leave feeling they’ve learned enough about a topic to help achieve their goal?

Click models and click patterns could help in determining this outcome.

Will someone reading your content leave feeling like they’ve had a satisfying experience?

Continuing to search on the same topic would indicate otherwise. “Especially,” as Jeff says, “if other sites in the competitive landscape lead to terminal sessions that indicate that they are more satisfied by them than you.”

Are you producing lots of content on different topics in hopes that some of it might perform well in search results?

Low authoritativeness on many topics could be an indicator.

Are you using extensive automation to produce content on many topics?

Google is most likely using some sort of ensemble method for detection. “Automatic Detection of Machine Generated Text: A Critical Survey” provides an overview of the challenges in this area.

Are you mainly summarizing what others have to say without adding much value?

Once again, Google’s patent for information gain could help here, although they may employ an ensemble method for rephrasing or detection.

Are you writing about things simply because they seem trending and not because you’d write about them otherwise for your existing audience?

Google could look at cluster development or the lack thereof as a signal. More likely, they just sort topics by search volume in descending order to see if you’re mostly covering the latest trends.
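As a rough sketch of what such a check might look like, the snippet below measures how much of a site’s coverage falls within the highest-volume topics. The function name, the topic labels, the volumes, and the cutoff of three are all hypothetical; nothing here reflects a confirmed Google method.

```python
def share_in_top_volume(site_topics, volume_by_topic, top_n):
    """Share of a site's topics that fall within the top_n topics by volume."""
    if not site_topics:
        return 0.0
    # Sort every known topic from highest to lowest search volume.
    ranked = sorted(volume_by_topic, key=volume_by_topic.get, reverse=True)
    top = set(ranked[:top_n])
    return sum(1 for topic in site_topics if topic in top) / len(site_topics)


# Made-up topics and monthly search volumes.
volumes = {
    "trend a": 9000, "trend b": 7500, "trend c": 6000,
    "niche d": 400, "niche e": 250, "niche f": 90,
}
trend_chaser = ["trend a", "trend b", "trend c"]
specialist = ["trend a", "niche d", "niche e", "niche f"]

print(share_in_top_volume(trend_chaser, volumes, top_n=3))  # 1.0
print(share_in_top_volume(specialist, volumes, top_n=3))    # 0.25
```

A site whose coverage sits entirely in the top slice looks like it is chasing volume, whereas a specialist site mixes popular topics with low-volume supporting ones.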

Does your content leave readers feeling like they need to search again to get better information from other sources?

Continued search would be a strong signal that this is taking place.

Are you writing to a particular word count because you’ve heard or read that Google has a preferred word count?

Google could detect a pattern where word count is oddly similar to the average, or where pages have roughly the same word count.
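One simple way to spot that kind of pattern is the coefficient of variation (standard deviation divided by the mean) of word counts across a site. The sketch below is an assumption about how such a check could work, not a known Google signal; the function name and sample word counts are invented.

```python
from statistics import mean, pstdev


def word_count_variation(word_counts):
    """Coefficient of variation of page word counts.

    Values near 0 mean the pages are suspiciously uniform in length.
    """
    if not word_counts:
        return 0.0
    average = mean(word_counts)
    if average == 0:
        return 0.0
    return pstdev(word_counts) / average


# Made-up word counts for two sites.
written_to_target = [1500, 1520, 1490, 1510, 1480]
written_to_need = [300, 2400, 850, 1500, 4200]

print(round(word_count_variation(written_to_target), 3))
print(round(word_count_variation(written_to_need), 3))
```

The first site, where every page hovers around 1,500 words, produces a value close to zero. The second, where length follows the needs of each topic, produces a much larger one.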

Did you decide to enter some niche topic area without any real expertise, but instead mainly because you thought you’d get search traffic?

Page quality analysis could reveal this when many pages exhibit low quality in breadth and depth of coverage (think MarketMuse Content Score). This is a good way to weed out AI-generated (GPT-3) content as well. See GPT-3 Exposed: Behind the Smoke and Mirrors.

As well, Jeff adds, “If your site only has pages that align with keywords with search volume (think sort descend by Google’s data) be aware that that is a very odd signal.”

Does your content promise to answer a question that actually has no answer, such as suggesting there’s a release date for a product, movie, or TV show when one isn’t confirmed?

Google’s detecting the answer to certain things based on intent.

Takeaways

This update from Google doesn’t just target AI-generated content; it targets any content that is deemed unhelpful, regardless of who or what created it. Although Google is conducting page-level analysis, the results get rolled up to the site level. If you exceed an undetermined amount of unhelpful content, your entire site is weighed down. Because the entire process is automated, correcting the problem may not yield any results for months.

There is no one aspect that defines unhelpful content; rather, it’s a multitude of signals that contribute to that designation. Those signals are processed using machine learning, meaning Google can learn and adapt its ability to identify helpful content.

“The tough part about a site level weight,” declares Jeff, “is you never REALLY know that it is impacting you until G tells you this is the reason you are degrading. A smart test plan is the way back if you think you have been impacted by this or another publicly announced update.” Keep in mind that the bar for determining helpful content is probably set low by intention and you should expect it to rise over time.

MarketMuse evaluates quality at the site-topic combination level at scale. The best protection is knowledge. Book a demo and learn whether this update is an advantage for you or a risk.

Stephen leads the content strategy blog for MarketMuse, an AI-powered Content Intelligence and Strategy Platform. You can connect with him on social or his personal blog.