What Is Content Score?

There’s an old adage that you can’t improve what you don’t measure. But just because you measure something doesn’t mean you can improve it.

There’s no shortage of ways to score content. Some simple, some not so. But just because something is complicated doesn’t automatically make it useful.

In this post, we look at what a content score is, the different ways of measuring content quality, and how content marketing benefits from well-designed scoring systems.

Benefits of a Content Scorecard

Without some method of keeping score, it’s hard to know what’s working, what’s not, and what will. It’s harder still to pin down the cause in each case.

At its best, content scoring encourages the creation of high-quality content. Churning out as much content as possible is no answer to your content marketing woes. Nobody likes bad content. Quality content that answers a searcher’s intent is the type of content required for ranking high in organic search results.

Scoring content provides a benchmark and an objective goal for which to aim. Otherwise, you’re shooting from the hip and basing your judgment on gut feeling. That approach is not scalable.

Proper content scoring helps everyone on your team “get it right” the first time. Establishing a minimum passing score is a step toward publishing in-depth content of the highest quality.

But good scoring isn’t a vanity metric like the number of retweets. Vanity metrics are poor predictors of success, however you wish to define it.

What Defines a Good Content Quality Score Methodology

Implemented correctly, content scoring can be a good way to forecast a favorable outcome. Unfortunately, this isn’t always the case. In fact, the scoring process is often one that ranks and tracks instead of predicts.

Imagine using the percentage of visitors that downloaded an asset as part of a content score. In this case, the more downloads the better the score.

You get a high score. That’s great! But how does that help determine whether a new piece of content will perform just as well?

It doesn’t.

What if you use traffic as an indicator in your score report? Same thing.

Bounce rate? Ditto.

None of these factors helps in determining content validity. They provide absolutely zero insight into why something performs the way it does and, more importantly, how it can be improved.

Look at this another way.

As I write this post, the Toronto Blue Jays rank fourth in the American League East with 65 wins and 80 losses. Although that raw score tells them where they stand, it doesn’t tell them what they need to do to win more games.

In content marketing, we need scoring methods that help us win. Simply knowing that our content is good or bad, based on a particular score, is not good enough.

A good scoring methodology should encourage an appropriate action.

Different Ways of Scoring Content

We’ve already discussed some metrics that Google Analytics provides. But using them as a content scoring method isn’t very helpful since the score doesn’t help improve the content. Knowing a blog post gets lots of traffic is far different from knowing how to get more traffic to that particular post.

Even using a data point like traffic to create sophisticated trajectories still doesn’t help figure out the “why.” In marketing, we need to know why something is happening. We also need to understand how to duplicate it if it’s good or fix it if there’s a problem.

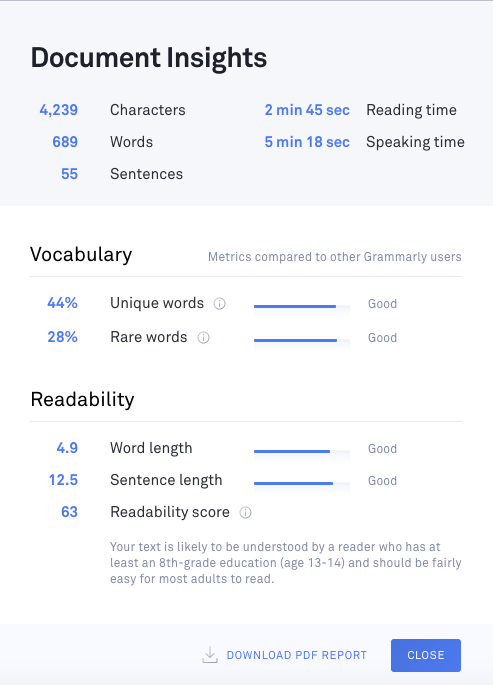

Take this example. The Flesch-Kincaid readability tests show how difficult a passage is to understand. Their formulas are based on the total words, total sentences, and total syllables.

On the Flesch Reading Ease scale, the more points the better. A higher score means the content is easier to read. Clarity is always good for your audience.
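To make that concrete, here is a minimal sketch of the Flesch Reading Ease calculation in Python. It takes the three counts as inputs rather than trying to count syllables itself, and the sample counts are made up for illustration. They don't come from any real page.

```python
def flesch_reading_ease(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch Reading Ease from raw counts; a higher score means easier reading."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# Hypothetical counts for two drafts of the same 120-word passage.
long_draft = flesch_reading_ease(total_words=120, total_sentences=4, total_syllables=200)
short_draft = flesch_reading_ease(total_words=120, total_sentences=8, total_syllables=170)

print(f"long sentences, long words:   {long_draft:.1f}")
print(f"short sentences, short words: {short_draft:.1f}")
```

The second draft scores higher because it has fewer words per sentence and fewer syllables per word, the only two levers the formula has.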

There are SEO benefits as well, including reduced bounce rate and increased time spent on-page.

Want a better readability score? Simple. Reduce your word length and sentence length. But there’s more to good content than readability.

You could try manually scoring your content, as I discussed in How to Create Great Content That Ranks. But the amount of time required is enormous, so it’s really not a viable alternative.

The Value of Content Scoring and How Acrolinx Delivers It

Content is pivotal to the relationship between a business and its customers. But awful content does a lot more harm than good.

Content that’s off-strategy, off-brand, poor quality, and inconsistent can cause confusion and anger the people who read it. Something no business wants.

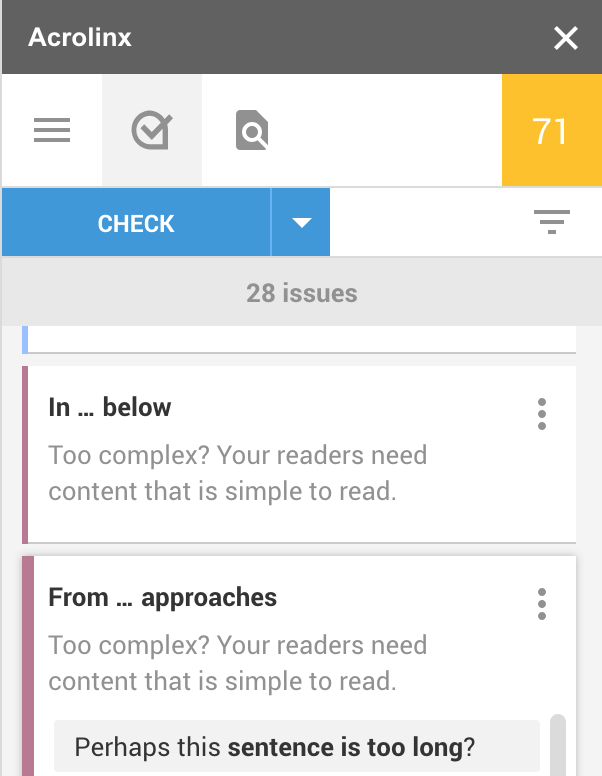

But luckily, there’s a way to gauge content health before publishing. It’s called Acrolinx content scoring.

Acrolinx is an AI-powered platform that helps enterprises align their content to their strategy so they can meet their goals. A key part of ensuring alignment is the Acrolinx Score. It’s a number that represents the degree to which a given piece (or body) of content complies with an organization’s strategy and guidelines.

It’s a quick, easy way to measure, track, and compare content quality, impact, and performance. The higher the Acrolinx score, the higher the caliber of the content.

Here’s how it works.

First, Acrolinx captures your organization’s requirements for tone, style, and terminology. Then, as content creators develop content, it gives them in-line feedback, including an Acrolinx Score. Through other analytics, Acrolinx then provides deeper insights into your content and how it will perform, including content comparisons, trend analyses, quality and improvement metrics, and more.

With Acrolinx content scoring, you can be sure the content your organization creates not only represents your strategy but also reflects the standards of your brand.

You can learn more at acrolinx.com.

How MarketMuse Scores Content

Highly readable and on-brand content is part of the content quality equation. But you also need to determine how well your material covers a given topic. Is it representative of your topical expertise, or is it just marketing fluff?

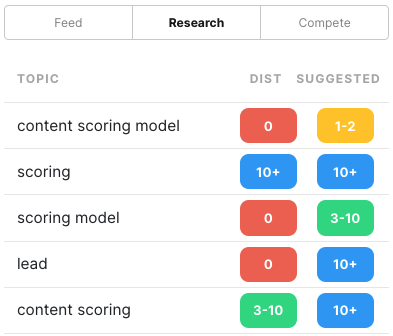

MarketMuse’s content scoring model enables large-scale assessment of content quality. In this manner, you can adopt an objective score to find and improve low-quality content.

Here’s how it works.

For any given focus topic, MarketMuse generates a model. Contained within that representation are numerous subtopics and their relationship to the main theme, including data concerning their distribution within the content.

MarketMuse analyzes your content, compares it to the model, and assigns a score. In-depth content that offers detailed coverage of relevant subtopics receives a higher total score than shallow pages that provide superficial treatment. With MarketMuse you can quickly conduct a content audit to determine which pages need improvement. You can also compare writers against topics to see who naturally writes more in-depth content on which subjects.
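To get a feel for the general idea of scoring coverage against a topic model, here is a toy sketch in Python. It is not MarketMuse’s algorithm, which isn’t described in this post. It simply assumes a hypothetical model that maps each subtopic to a suggested number of mentions, then awards one point per mention up to that cap. The names `topic_model` and `coverage_score`, and all the sample data, are invented for illustration.

```python
import re


def coverage_score(text: str, topic_model: dict[str, int]) -> int:
    """Toy score: one point per subtopic mention, capped at the suggested count."""
    lowered = text.lower()
    score = 0
    for subtopic, suggested_mentions in topic_model.items():
        # Match the subtopic as a whole phrase, ignoring case.
        pattern = r"\b" + re.escape(subtopic.lower()) + r"\b"
        mentions = len(re.findall(pattern, lowered))
        score += min(mentions, suggested_mentions)
    return score


# Hypothetical model: subtopic -> suggested number of mentions.
topic_model = {"content audit": 2, "readability": 1, "search intent": 2}

shallow = "Our tips improve readability."
in_depth = (
    "Start with a content audit. Map each page to search intent, "
    "check readability, then repeat the content audit quarterly."
)

shallow_score = coverage_score(shallow, topic_model)
in_depth_score = coverage_score(in_depth, topic_model)
print(shallow_score, in_depth_score)
```

Even in this simplified form, the score points to an action: the gap between a page’s score and the model’s total shows which subtopics still need coverage.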

Think of the scoring model as a relative assessment, comparing your content to that of your SERP competitors. With our scoring system, although you can achieve a theoretical score of 100, that doesn’t mean your content piece is perfect. There’s always room for improvement.

Here’s what Matt Cook at VacationsMadeEasy had to say. “I pulled a list of 100 pages from SEMRush where we were ranking page 2. I gave the 100 to my team and had them use MarketMuse on 50 of them, and nothing on the other 50. The results were astonishing. Forty-five of the 50 pages where MarketMuse was used improved significantly, while only 2 of the pages that weren’t worked on improved at all.”

However you choose to score your content, make sure that achieving a high score brings about a successful end result. Just as important, you need to know exactly how to improve your score. Otherwise, what you measure can’t necessarily be improved. At least, not in any way that can be scaled.

In the end, a good scoring system encourages specific action that generates a positive outcome.

If you’d like to learn how to create better content faster, visit our blog.

If you know another marketer who’d enjoy reading this page, share it with them via email, LinkedIn, Twitter, or Facebook.

Stephen leads the content strategy blog for MarketMuse, an AI-powered Content Intelligence and Strategy Platform. You can connect with him on social or his personal blog.