Cheap SEO Tools – Buyer Beware!

More content. Better content. Faster.

That is every content strategist’s dream. If you could get the budget, you’d be all set to dominate the search engines!

Over the past 12 to 18 months, several inexpensive or free tools have appeared that promise to deliver on that dream.

Unfortunately, relying on them could be the beginning of a terrible nightmare. Here’s why.

Poor Data Quality

Every inexpensive SEO tool I’ve encountered suffers from poor data quality. Before we get into why this problem is so dangerous, let’s clarify what we mean by “poor data quality.” An example will serve best.

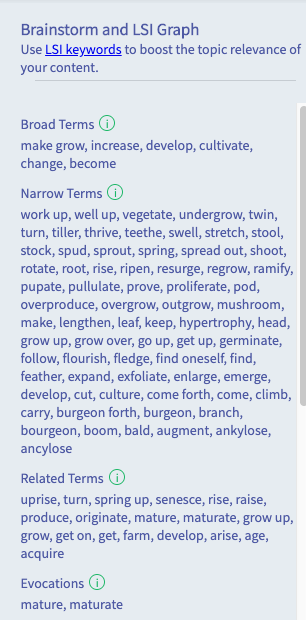

Sometimes it’s just obvious, as in this example from a free LSI keyword tool. You can tell right away that this list of “LSI keywords” for the search term “how to grow an avocado” isn’t going to help you create best-in-class content.

There are a host of issues with using LSI, too many to get into here. For more details, refer to this post on the problems with latent semantic indexing.

Data that poor is actually a blessing in disguise. It’s obviously not helpful, so you won’t rely on it.

The next example is a little more insidious. Take a look at the screenshot below, which comes from a tool that shall remain nameless. At first glance, the data looks fine.

But let’s think about this for a second. The subject of our article is “how to grow an avocado,” so we’ll probably need to talk about topics like:

- Different varieties of avocado (maybe some are easier to grow than others)

- Temperature requirements

- Watering

- Soil

- Light requirements

- Spacing and height

Referring back to the example, you’ll notice that the list lacks every topic pertinent to growing an avocado. Instead, what we have is a list of keywords generally associated with avocados.

This seemingly slight difference is critical. Follow these suggestions, and you’ll end up with a generic article on avocados, with some additional information about their culinary use and health benefits.

But you won’t have what you were aiming to produce: an article on growing avocados. That’s the danger inherent in cheap solutions. Relying on their bad data can lead your SEO efforts seriously astray.

Lack of Topic Specificity

Low-priced SEO content optimization tools need to take shortcuts to save money. Instead of analyzing hundreds of thousands of pages to service each query, they scan maybe 10 to 20. Consequently, they struggle when topics get very specific, and when the top-ranking pages reflect a mix of different user intents (intent fracture).

Take this topic as an example: “How does the petroleum industry deal with solids control and waste management of its liquid slurry?”

While some may call this a very long-tail keyword, I consider it to be a very specific topic. You’ll need to examine more than just the first couple of pages in Google if you want to craft truly expert-level content on this subject.

The dangerous part is that you don’t know where the tool crosses the line between serving acceptable data and serving inferior data. It’s hit and miss, depending on the term being researched.

Ineffective Semantic Analysis

Inexpensive content optimization tools are heavily focused on keywords. They don’t understand the meaning of words or how words relate to one another. As a result, they can’t help you position your content the way a subject-matter expert would write it.

Being able to analyze a page against many topics at once is key to semantically focused content optimization. A keyword-based approach would require a separate report for each keyword, and those reports would provide conflicting recommendations.

Substandard Text Analysis

Humans have no problem looking at a page and telling the article’s content apart from comments or boilerplate text. That’s not so easy for computer algorithms. The problem is that cheap software doesn’t consistently strip boilerplate from your pages and those of your competitors. Nor does it let you override that removal to analyze a customized block of text.

What does that mean?

You’re working with inaccurate and incomplete data, creating content based on false information.
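To show why boilerplate removal is harder than it looks, here is a minimal sketch of one common heuristic, link density: blocks that are very short or made up mostly of link text (menus, footers, tag clouds) are treated as boilerplate. The `Block` type, the thresholds, and the `force_keep` override are hypothetical illustrations chosen for this example, not how MarketMuse or any particular tool works.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """A hypothetical text block already extracted from a page's HTML."""
    text: str
    link_chars: int  # number of characters of the text that sit inside <a> links


def link_density(block: Block) -> float:
    """Fraction of the block's characters that belong to links."""
    return block.link_chars / max(len(block.text), 1)


def is_boilerplate(block: Block, max_density: float = 0.33, min_words: int = 10) -> bool:
    """Flag short or link-heavy blocks as boilerplate.

    The thresholds are illustrative assumptions, not tuned values.
    """
    return len(block.text.split()) < min_words or link_density(block) > max_density


def main_content(blocks: list[Block], force_keep: frozenset[int] = frozenset()) -> str:
    """Join the blocks judged to be article content.

    `force_keep` holds indices of blocks the user wants analyzed anyway,
    overriding the heuristic: the kind of manual override described above.
    """
    kept = [
        b.text
        for i, b in enumerate(blocks)
        if i in force_keep or not is_boilerplate(b)
    ]
    return "\n".join(kept)


if __name__ == "__main__":
    page = [
        Block("Home | Shop | Blog | Contact", link_chars=19),  # navigation menu
        Block("Avocado trees need well-drained soil, full sun, and protection "
              "from frost to thrive.", link_chars=0),           # article text
        Block("Great post, thanks!", link_chars=0),             # reader comment
    ]
    print(main_content(page))
    print(main_content(page, force_keep=frozenset({2})))
```

On this sample page, the menu and the short comment are dropped and only the avocado sentence survives. The heuristic is easy to fool, though: a long reader comment without links would pass as article content, which is exactly the kind of inaccurate data the section warns about.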

Inferior Competitive Analysis

Let’s be clear here. A letter grade is not an actionable insight. Depending on the result, it may make you feel happy or disappointed, but it doesn’t tell you what needs to be improved.

Presenting information in the form of a heat map, like MarketMuse does, lets you examine your competitors’ content in detail, providing head-to-head gap analysis at the page level.

Also, a numerical content score, like the one MarketMuse uses, is more precise than a letter grade because it has a higher resolution. Here’s what I mean. With a numerical score, every change to your content is reflected in the score.

Not so with a letter grade. Each grade covers a band of roughly five to six points, so your score can rise by several points without your grade changing.

How come?

Well, six letter grades (A through F), each with a plain, ‘+’, and ‘-’ version, add up to eighteen possible grades. Spread evenly across a 100-point scale, each grade covers 100 ÷ 18 ≈ 5.56 points.

Not very precise, is it?
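The arithmetic above can be checked with a short sketch. It assumes, as the text does, that the 18 grades from A+ down to F- split a 0 to 100 scale into equal bands; real tools may draw their grade boundaries differently, and `letter_grade` is a hypothetical helper for illustration only.

```python
# 18 grades, best to worst: A+, A, A-, B+, ..., F+, F, F-
GRADES = [f"{letter}{mod}" for letter in "ABCDEF" for mod in ("+", "", "-")]
BAND = 100 / len(GRADES)  # width of one grade band, about 5.56 points


def letter_grade(score: float) -> str:
    """Map a 0-100 score onto 18 equal-width grade bands (top band = A+)."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    # Band 0 is the bottom band (F-); multiplying before dividing avoids
    # float rounding at the boundaries, and a perfect 100 is clamped into A+.
    band_from_bottom = min(int(score * len(GRADES) // 100), len(GRADES) - 1)
    return GRADES[len(GRADES) - 1 - band_from_bottom]


if __name__ == "__main__":
    print(f"{len(GRADES)} grades, {BAND:.2f} points per grade")
    for score in (67, 72, 73):
        print(score, letter_grade(score))
```

Under these assumptions, `letter_grade(67)` and `letter_grade(72)` both return `"B-"`: a five-point improvement in the content score that the letter grade never shows.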

Absence of Actionable Insight

Taking three off-the-shelf third-party APIs and wrapping them in a nice-looking interface doesn’t make your SEO tool “modern.” It merely limits what you can do, making it hard to offer:

- An on-demand content auditing/inventory solution.

- Site-level tracking of topical coverage, strengths, weaknesses, and opportunities.

- The ability to create comprehensive Content Briefs at scale.

- Competitive Analysis.

- External and Internal Linking Recommendations.

- Inline Content and Draft Optimization.

- Topic-focused Research.

- Intent and Question Analysis.

- Long-form content creation using natural language generation.

All of the above requires a lot more than just a few APIs.

Summary

Cheap SEO tools, with their poor data quality and other associated problems, lead to missed opportunities. Technology is only part of the overall content investment, so you had better be sure it’s accurate, usable, and functional.

Buyer beware! What you don’t know can hurt you.

If you’d like to learn how to create better content faster, visit our blog.

Stephen leads the content strategy blog for MarketMuse, an AI-powered Content Intelligence and Strategy Platform. You can connect with him on social or his personal blog.